The need for major financial institutions to measure their risk emerged in the 1970s, after an increase in financial instability. Baumol (1963) made the first attempt to estimate the risk that financial institutions faced. He proposed a measure based on the standard deviation, adjusted by a confidence level parameter that reflects the user’s attitude to risk. In essence, this measure does not differ from the widely known Value-at-Risk (VaR), which refers to a portfolio's worst outcome that is likely to occur at a given confidence level. According to the Basle Committee, the VaR methodology can be used by financial institutions to calculate capital charges in respect of their financial risk.
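
As a minimal illustration of the quantile definition above, the following sketch computes a one-day VaR for a long or a short position. It assumes normally distributed returns; the function name `normal_var`, its parameters, and the normality assumption are illustrative choices and are not taken from the studies cited here.

```python
from statistics import NormalDist


def normal_var(mu, sigma, coverage=0.95, position="long"):
    """One-day VaR expressed as a return quantile, assuming normal returns.

    mu, sigma : forecast mean and standard deviation of the daily return
    coverage  : confidence level, e.g. 0.95 or 0.99
    position  : "long" (risk in the left tail) or "short" (right tail)
    """
    if not 0.5 < coverage < 1.0:
        raise ValueError("coverage must lie in (0.5, 1)")
    # Standard normal quantile of the tail probability; negative here.
    z = NormalDist().inv_cdf(1.0 - coverage)
    if position == "long":
        return mu + z * sigma  # return level breached with prob. 1 - coverage
    if position == "short":
        return mu - z * sigma  # mirror image in the right tail
    raise ValueError("position must be 'long' or 'short'")


if __name__ == "__main__":
    # Hypothetical inputs: zero mean, 1% daily standard deviation.
    print(normal_var(0.0, 0.01, 0.99, "long"))
    print(normal_var(0.0, 0.01, 0.99, "short"))
```

With a zero mean, the 99% VaR of a long position lies roughly 2.33 standard deviations below zero. Replacing the normal quantile with that of a fat-tailed or skewed distribution changes only the line that computes `z`.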

Since JP Morgan made its RiskMetrics system available on the Internet in 1994, both the popularity of VaR and the debate among researchers about the validity of its underlying statistical assumptions have increased. This is because VaR is essentially a point estimate of the tails of the empirical distribution. The free availability of RiskMetrics and the plethora of available datasets prompted academics and practitioners to search for the best-performing risk management technique. However, even now, the results are conflicting and confusing.

Giot and Laurent (2003a) calculated the VaR number for long and short equity trading positions and proposed the APARCH[i] model with skewed Student-t conditionally distributed innovations (APARCH-skT), as it had the best overall performance in terms of the proportion of failure test. In a similar study, Giot and Laurent (2003b) recommended the same model to risk managers for estimating the VaR number of six commodities, even though a simpler model (ARCH-skT) generated accurate VaR forecasts. Huang and Lin (2004) argued that for the Taiwan Stock Index Futures, the APARCH model under the normal (Student-t) distribution must be used by risk managers to calculate the VaR number at the lower (higher) confidence level.

Although the APARCH model comprises several volatility specifications, its superiority has not been confirmed by all researchers. Angelidis and Degiannakis (2005) opined that “a risk manager must employ different volatility techniques in order to forecast accurately the VaR for long and short trading positions”, whereas Angelidis et al. (2004) considered that “the Arch structure that produces the most accurate VaR forecasts is different for every portfolio”. Furthermore, Guermat and Harris (2002) applied an exponentially weighted likelihood model to three equity portfolios (US, UK, and Japan) and proved its superiority to the GARCH model under the normal and the Student-t distributions in terms of two backtesting measures (unconditional and conditional coverage). Moreover, Degiannakis (2004) studied the performance of various risk models in forecasting the one-day-ahead realized volatility and the daily VaR. He proposed the fractionally integrated APARCH model with skewed Student-t conditionally distributed innovations (FIAPARCH-skT), which efficiently captures the main characteristics of the empirical distribution. Focusing only on the VaR forecasts, So and Yu (2006) argued, on the other hand, that it was more important to model the fat-tailed underlying distribution than the fractional integration of the volatility process. The two papers, one by Degiannakis (2004) and the other by So and Yu (2006), among many others, highlight that different volatility techniques are applied for different purposes.

Contrary to the contention of the previous authors, including Mittnik and Paolella (2000), that the most flexible models generate the most accurate VaR forecasts, Brooks and Persand (2003) pointed out that the simplest ones, such as the historical average of the variance or the autoregressive volatility model, achieve an appropriate out-of-sample coverage rate. Similarly, Bams et al. (2005) argued that complex (simple) tail models often lead to overestimation (underestimation) of the VaR.

VaR, however, has been criticized on two grounds. On the one hand, Taleb (1997) and Hoppe (1999) argued that the underlying statistical assumptions are violated because they cannot capture many features of the financial markets (e.g. intelligent agents). Under the same framework, many researchers (see, for example, Beder, 1995 and Angelidis et al., 2004) showed that different risk management techniques produce different VaR forecasts and, therefore, that these risk estimates might be imprecise. Last, but not least, the standard VaR measure presumes that asset returns are normally distributed, whereas it is widely documented that they exhibit non-zero skewness and excess kurtosis; hence, the VaR measure either underestimates or overestimates the true risk.

On the other hand, even if VaR helps financial institutions to understand the risk they face, it is now widely believed that VaR is not the best risk measure. Artzner et al. (1997, 1999) showed that it is not necessarily sub-additive, i.e., the VaR of a portfolio may be greater than the sum of the individual VaRs; therefore, managing risk with it may fail to automatically stimulate diversification. Moreover, it does not indicate the size of the potential loss, given that this loss exceeds the VaR. To remedy these shortcomings, Delbaen (2002) and Artzner et al. (1997) introduced the Expected Shortfall (ES) risk measure, which equals the expected value of the loss, given that a VaR violation has occurred. Furthermore, Basak and Shapiro (2001) suggested an alternative risk management procedure, namely limited expected losses based risk management (LEL-RM), which also focuses on the expected loss when (and if) losses occur. They substantiated that the proposed procedure generates lower losses than VaR-based risk management techniques do.
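
The failure of sub-additivity can be reproduced with a small, purely hypothetical example: two independent bonds, each losing 100 with probability 4% and nothing otherwise. The sketch below computes VaR and ES at the 95% level for one bond and for the two-bond portfolio. All names (`var_discrete`, `es_discrete`, `combine`) and numbers are assumptions made for illustration. ES is computed here as the average loss in the worst 5% of outcomes (the tail-mean form), which is the version that stays coherent for discrete distributions and coincides with the conditional expectation described above when the loss distribution is continuous.

```python
from itertools import product


def var_discrete(dist, coverage):
    """Smallest loss l with P(L <= l) >= coverage.

    dist is a list of (loss, probability) pairs summing to one.
    """
    cumulative = 0.0
    for loss, prob in sorted(dist):
        cumulative += prob
        if cumulative >= coverage - 1e-12:
            return loss
    return max(loss for loss, _ in dist)


def es_discrete(dist, coverage):
    """Average loss over the worst (1 - coverage) share of outcomes."""
    tail = 1.0 - coverage
    remaining, total = tail, 0.0
    for loss, prob in sorted(dist, reverse=True):
        take = min(prob, remaining)  # probability mass taken from this outcome
        total += take * loss
        remaining -= take
        if remaining <= 1e-12:
            break
    return total / tail


def combine(dist_a, dist_b):
    """Loss distribution of the sum of two independent positions."""
    out = {}
    for (la, pa), (lb, pb) in product(dist_a, dist_b):
        out[la + lb] = out.get(la + lb, 0.0) + pa * pb
    return sorted(out.items())


bond = [(0.0, 0.96), (100.0, 0.04)]   # hypothetical single bond
portfolio = combine(bond, bond)       # two independent bonds

if __name__ == "__main__":
    print(var_discrete(bond, 0.95), var_discrete(portfolio, 0.95))
    print(es_discrete(bond, 0.95), es_discrete(portfolio, 0.95))
```

In this example the 95% VaR of each bond is 0, while the 95% VaR of the portfolio is 100, so the portfolio VaR exceeds the sum of the individual VaRs. The ES of the portfolio (about 103.2) remains below the sum of the individual ES values (about 80 each).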

ES is the most attractive coherent risk[ii] measure and has been studied by many authors (see Acerbi et al., 2001; Acerbi, 2002; and Inui and Kijima, 2005). Yamai and Yoshiba (2005) compared the two measures, VaR and ES, and argued that VaR is not reliable during market turmoil as it can mislead rational investors, whereas ES can be a better choice overall. However, they pointed out that the gains from managing risk with the ES measure are substantial whenever its estimation is accurate. In other cases, they advise market practitioners to combine the two measures for best results.

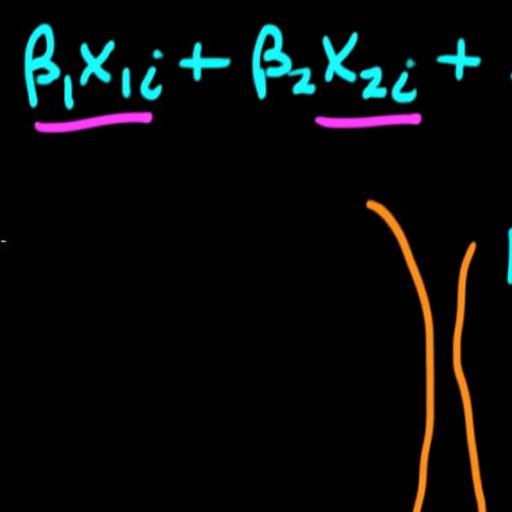

Our study sheds light on the issue of volatility forecasting in a risk management environment and on the evaluation procedure of various risk models. It compares the performance of the most well-known risk management techniques for different markets (stock exchanges, commodities, and exchange rates) and trading positions. Specifically, it estimates the VaR and the ES by using 11 ARCH volatility specifications under four distributional assumptions, namely the normal, Student-t, skewed Student-t, and generalized error distributions. We investigated the resulting 44 models following a two-stage backtesting procedure to assess the forecasting power of each volatility technique and to select one model for each financial market. In the first stage, to test the statistical accuracy of the models in the VaR context, we examined whether the average number of violations is statistically equal to the expected one and whether these violations are independently distributed. In the second stage, we employed standard forecast evaluation methods by comparing the returns of a portfolio with the ES forecast whenever a violation occurs.
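
The two-stage logic can be sketched as follows. The passage does not name the specific tests or the loss function, so this sketch makes explicit assumptions: it uses Kupiec-style and Christoffersen-style likelihood-ratio tests for the first stage, and a mean squared gap between the realized return and the ES forecast on violation days for the second stage. The function names are hypothetical, and the violation rule (`r < VaR`) corresponds to a long position.

```python
import math


def _xlogy(x, y):
    """x * log(y) with the convention 0 * log(0) = 0."""
    return 0.0 if x == 0 else x * math.log(y)


def _chi2_1_pvalue(stat):
    """Upper-tail probability of a chi-square(1) variable."""
    return math.erfc(math.sqrt(max(stat, 0.0) / 2.0))


def unconditional_coverage(hits, p):
    """LR test that the violation rate equals the expected rate p."""
    t, n = len(hits), sum(hits)
    rate = n / t
    ll_null = _xlogy(t - n, 1 - p) + _xlogy(n, p)
    ll_alt = _xlogy(t - n, 1 - rate) + _xlogy(n, rate)
    lr = -2.0 * (ll_null - ll_alt)
    return lr, _chi2_1_pvalue(lr)


def independence(hits):
    """LR test that a violation today does not depend on yesterday's."""
    n = [[0, 0], [0, 0]]  # n[i][j]: transitions from state i to state j
    for prev, cur in zip(hits[:-1], hits[1:]):
        n[prev][cur] += 1
    from0, from1 = n[0][0] + n[0][1], n[1][0] + n[1][1]
    pi01 = n[0][1] / from0 if from0 else 0.0
    pi11 = n[1][1] / from1 if from1 else 0.0
    pi = (n[0][1] + n[1][1]) / (from0 + from1)
    ll_null = _xlogy(n[0][0] + n[1][0], 1 - pi) + _xlogy(n[0][1] + n[1][1], pi)
    ll_alt = (_xlogy(n[0][0], 1 - pi01) + _xlogy(n[0][1], pi01)
              + _xlogy(n[1][0], 1 - pi11) + _xlogy(n[1][1], pi11))
    lr = -2.0 * (ll_null - ll_alt)
    return lr, _chi2_1_pvalue(lr)


def es_loss(returns, var_forecasts, es_forecasts):
    """Second stage: mean squared gap between return and ES on violation days."""
    gaps = [(r - es) ** 2
            for r, v, es in zip(returns, var_forecasts, es_forecasts)
            if r < v]  # long position: violation when return falls below VaR
    return sum(gaps) / len(gaps) if gaps else 0.0


if __name__ == "__main__":
    hits = [1] * 50 + [0] * 950  # hypothetical violation sequence (0/1 flags)
    print(unconditional_coverage(hits, 0.05))
    print(independence(hits))
```

Under these assumptions, a model passes the first stage when neither test rejects at the chosen significance level, and the surviving models are then ranked by `es_loss`, with smaller values preferred.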

The results of this paper are important for many reasons. VaR summarizes the risk exposure of the investor in just one number, and therefore portfolio managers can interpret it quite easily[iii]. Yet, it is not the most attractive risk measure. ES, on the other hand, is a coherent risk measure, and hence using it to evaluate the risk models can be rewarding. Currently, however, most researchers judge the models only by calculating the average number of violations. Moreover, even though risk managers hold both long and short trading positions to hedge their portfolios, most of the research has been applied only to long positions. By considering both positions, it is possible to investigate whether a model can capture the characteristics of both tails simultaneously.

This study, to the best of our knowledge, is the first that estimates the VaR and ES numbers[iv] for three different markets simultaneously, and therefore we can infer whether these markets share common features in a risk management framework. To this end, we combined the most well-known and concurrent parametric models with four distributional assumptions to find out which model has the best overall performance. Even though we did not include all ARCH specifications available in the literature, we estimated the models that captured the most important characteristics of the financial time series and those that were already used, or were extensions of specifications that were implemented, in similar studies. Finally, we employed a two-stage procedure to investigate the forecasting power of each volatility technique and to guide the VaR model selection process. Following this procedure, we could select a risk model that predicts the VaR number accurately and minimizes, if a VaR violation occurs, the difference between the realized and the expected losses. In contrast, earlier research focused mainly on the unconditional coverage of the models.

To summarize, this study juxtaposes the performance of the most well-known parametric techniques and shows that, under the proposed backtesting procedure, for each financial market there is a small set of models that accurately estimate the VaR number for both long and short trading positions and for two confidence levels. Moreover, contrary to the findings of previous research, the more flexible models do not necessarily generate the most accurate risk forecasts, as a simpler specification can be selected along two dimensions: (a) distributional assumption and (b) volatility specification. For the distributional assumption, the standard normal or the GED is the most appropriate choice, depending on the financial asset, trading position, and confidence level. As for the volatility specification, asymmetric specifications perform better than symmetric ones, and in most cases a fractionally integrated parameterization of the volatility process is necessary.